What does artificial intelligence look like?

Search online, and the answer is likely to be streams of code, glowing blue brains or white robots alongside men in suits: outdated tropes set by the popular culture of the 80s and 90s.

These misleading representations are used for everything from news stories and advertising to personal blogs. These stereotypes can negatively impact public perceptions of AI by giving people unrealistic expectations of technologies.

Fast-forward to 2023, and AI ranges from invisible background processes powering experiences we interact with daily to more overt interactions, such as AI-powered chatbots. It is a far cry from the dystopian future Hollywood has shown us.

Energy Efficiency by Linus Zoll

We are, however, still in the early days of how AI can be applied to systems at scale, and to ensure the technology is responsibly developed and deployed, discussion around it needs to be as accessible as possible.

Visualising AI is an initiative that aims to open up those conversations and make them accessible through imagery and stories.

At Google DeepMind, we have commissioned visual artists, illustrators and designers from around the world, inviting them into discussions with researchers, engineers, ethicists and other domain experts.

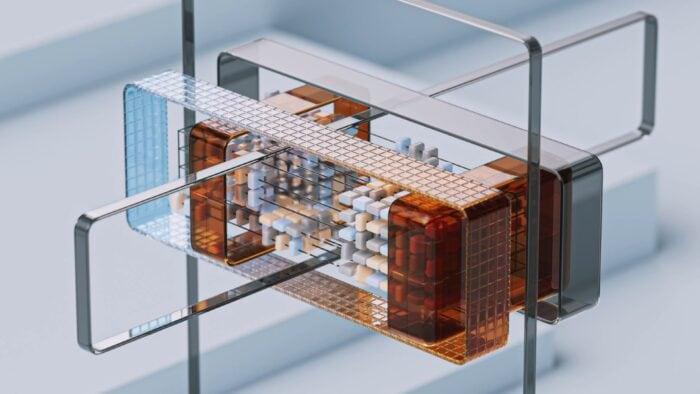

Large Models by Wes Cockx

Each release of imagery and animation tackles topics that are making headlines and are top of mind for the industry, such as generative AI and multimodal models, assistive AI for productivity and creativity, how models are trained, and how they might propel industries such as energy and life sciences forward.

Reinforcement Learning by Vincent Schwenk

The artists then create visual representations of what they have heard and researched in those discussions. This curatorial approach has led to unconventional and challenging interpretations of AI, each from the artist’s unique perspective.

As the collection grows, we hope to invite more and more artists to tackle new and emerging themes.

Examples from participating artists

Image Models by Linus Zoll

Linus Zoll explored the creation of AI-generated images using text-to-image diffusion models trained on vast numbers of pictures. His approach was to illustrate how AI can transform a blank canvas into a finished piece of art in an entirely automated manner. To convey the image data the model relies on, he used cubes with different materialities that are sorted and reorganised until they form a visual of a surreal landscape.

Creative Collaboration by XK Studio

XK Studio explored how tools like generative AI open up new opportunities for human-AI collaboration. The synergy between the technology and their creative process is almost magical for XK. They wanted to poetically depict how artists can collaborate with AI systems to find new points of view, speed up processes and reach new territories, whilst the artists, as creatives, always remain the driving force.

Large Language Models by Wes Cockx

Wes Cockx visualised Large Language Models, drawing inspiration from models like Google’s PaLM and OpenAI’s GPT. The piece starts with a prompt that asks how large models work, and the output is presented across shapes that evoke products users interact with regularly. The neural network traverses scattered shards and identifies statistical patterns and correlations among words and phrases.

Digital Assistants by Martina Stiftinger

Martina Stiftinger explored how AI is used as an assistive technology to improve productivity and enhance our work. Martina created a series of stories that visualise the supportive, time-saving aspects of AI-powered tools, which simplify processes so that time can be spent on what’s essential.

AI and Society by Novoto Studio

The team at Novoto Studio explored how AI and society are transforming together. Research in this area seeks to understand the impact of AI on individuals and society, and how people can harness its benefits through AI tools while mitigating risks through equitable development.

Data Labelling by Ariel Lu

Ariel Lu explored human involvement in the creation of AI systems, noting that a great many human decisions and subjective choices are involved in the design process of AI. Data labelling makes data usable to train AI, but the human labour behind it is often undervalued. By fusing natural and artificial textures and materials, Ariel highlights that AI is human-centred across multiple complex layers.

Biodiversity by Nidia Dias

Biodiversity describes the breadth of life on Earth. Using AI, researchers can better understand, track and ultimately find ways to protect plants, animals and ecosystems. The concept behind Nidia Dias’s work is to illustrate the speed and efficiency with which AI can identify species within an environment. To depict this, Nidia designed an abstract, richly varied landscape in which various species symbolise biodiversity.

Making images available to everyone

While we have compensated artists for their brilliant work, we decided to open-source everything so that the artwork can begin to move the needle on public perception and understanding of AI.

Making the imagery freely available through our partners, Unsplash and Pexels, has led to over 100M views and 850K downloads. Media outlets, research organisations and civil society organisations have all picked up the imagery.

AI can transform our world for the better. Diversifying how we visualise these emerging technologies is the first step to expanding the wider public’s vision of what AI can look like today and become tomorrow.

Written by Ross West and Gaby Pearl.

Ross and Gaby are London-based visual designers at Google DeepMind, an artificial intelligence lab, where they help visualise AI research and breakthroughs.