Editor’s Note: Looking to create your first VR project? Considering the Unreal engine for development? Then read the following guest post from Firstborn very carefully.

Written by Bruno Ferrari, Senior Motion Graphic Artist; Jay Harwood, CG Supervisor; and Ron White, Lead 3D Artist.

Over the last three years, we’ve had the pleasure of producing some of the first branded VR experiences to hit the market. With each new project, we’ve encountered crazy challenges that put our backs against deadlines and had us asking, “What the f*ck have we gotten ourselves into?”

Whether stitching and color-correcting live-action footage, rendering massive amounts of masks and utility passes for pre-rendered 3D, or figuring out how to track and combine both in the same experience, we always came away with tips and tricks to tackle the next project.

Nothing, however, could prepare us for the move from passive to interactive VR experiences.

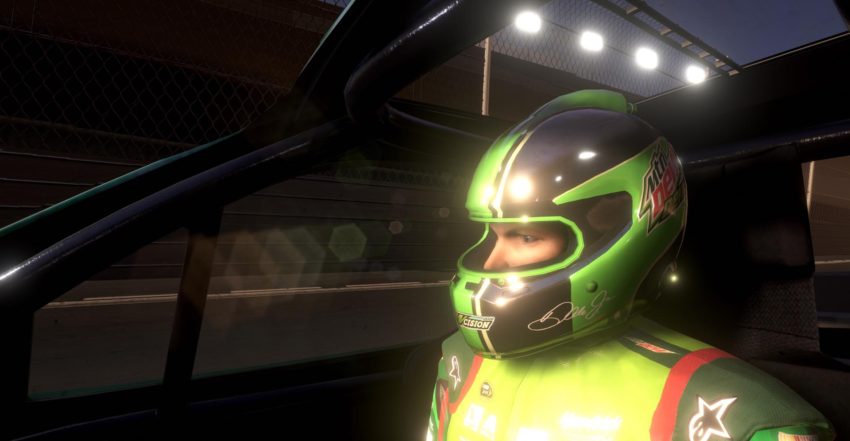

For our fourth VR installment for Mountain Dew, we had to create an experience that blurred the line between gaming and marketing by offering full immersion and interactivity, powered by the Unreal game engine. Unlike video, where the user is only a viewer locked to a single point of view, the engine lets the user move anywhere, interact with the environment and have the environment respond.

Racing legend Dale Earnhardt Jr.

When viewers put on the VR headset, they are transported to an immersive racing world. They start behind the wheel of a NASCAR stock car with Dale Earnhardt Jr. as their co-pilot, then choose their own driving adventure down the racetrack of their choice.

It was a bear of a project, but we learned a lot along the way – and we feel it only fair to share some of these growing pains with others experimenting in Unreal for VR.

1. Deepen Your Bench

Although our team consisted of avid gamers, none of us had ever worked in a game engine. This new space turned out to be much further from our usual motion graphics and VFX expertise, because we now had to create triggers to drive movement and then bring that movement to life in 3D.


In anticipation of the project, one of our 3D artists immersed himself in the engine. During his sabbatical, he spent time outside the office learning C++ and taking a course on Udemy.

Intimidated by coding? He also relied on the Blueprint visual scripting system, a node-based programming system for Unreal that’s more visual and approachable than hand-crafting thousands of lines of code. In addition, YouTube and the Unreal forums were a huge resource, as the community on both is very active and supportive.

We also brought on a triple-A game developer with experience on best-selling game franchises.

We’ve learned that with any VR project, you shouldn’t underestimate the amount of work you are about to undertake. If you think you need one artist, you’ll probably need four.

2. Forget Traditional Motion Graphics Techniques

This was our first project using Unreal, so we had about three weeks of R&D built into the three-month production schedule.

During this phase, we continued to iterate on the original script and story arcs to make sure we could achieve our vision within the confines of the platform. For instance, we tried traditional motion graphics techniques, such as applying QuickTime movies and transparencies to cards, but ran into errors because support for these features was still in development.

We also had to work more cleanly because there was less flexibility to swap out visuals. We hit another roadblock when the camera had trouble determining what was in the foreground and what was in the background, which led to unwanted results.

We tried mapping images to the camera, which would work in a normal video game, but in VR the eye point and the camera point did not line up, so the images still looked distorted. We ended up building a custom heads-up display inside the car and mapping those visuals onto physical planes in the cockpit.
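The principle behind the in-car HUD can be sketched in a few lines. This is a minimal illustration under our own assumptions, not Firstborn’s Unreal setup: rather than pinning an image to the camera, each HUD panel gets a fixed offset in the car’s local frame and is transformed into world space every frame, so it is rendered as ordinary scene geometry that each eye sees from its own viewpoint. The `Vec3` and `Pose` types and the `hudWorldPosition` function are hypothetical, and the car’s orientation is simplified to a yaw (heading) rotation about the vertical Z axis.

```cpp
#include <cassert>
#include <cmath>

// Hypothetical minimal types for illustration; not Unreal's FVector/FTransform.
struct Vec3 {
    double x, y, z;
};

// Car pose in world space, simplified to a position plus a yaw (heading)
// rotation about the vertical Z axis.
struct Pose {
    Vec3 position;
    double yawRadians;
};

// Transform a HUD panel's offset, given in the car's local frame, into world
// space. Placed this way, the panel is ordinary scene geometry: each eye's
// camera sees it from its own viewpoint, so it gets consistent stereo depth
// instead of being pinned to a single camera.
Vec3 hudWorldPosition(const Pose& car, const Vec3& localOffset) {
    assert(std::isfinite(car.yawRadians));
    const double c = std::cos(car.yawRadians);
    const double s = std::sin(car.yawRadians);
    return Vec3{
        car.position.x + c * localOffset.x - s * localOffset.y,
        car.position.y + s * localOffset.x + c * localOffset.y,
        car.position.z + localOffset.z,
    };
}
```

For example, a panel 60 units ahead of and 20 units above the car’s origin, with the car at (100, 0, 0) and turned 90 degrees, lands at roughly (100, 60, 20) in world space. In Unreal itself, the same effect would typically come from attaching the HUD mesh or widget component to the car and letting the engine’s transform hierarchy do this math.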

Pre-production has to be extensive so that, ideally, you can iron out all of these issues and lock the final assets before development begins.

3. Brace for Bottlenecks

We started off using Git for version control, hosted on Bitbucket, but quickly reached the limits of that setup.

As a result, there were a lot of bottlenecks in our workflow because of the time it took to push and pull files. We also needed to police the code so that artists wouldn’t break anything accidentally.

With each new addition, we had to allow time for refactoring to make sure the environment was completely stable before moving forward. We decided to limit asset integration to our developers, but that meant a team of seven or eight artists was creating work simultaneously that could not be immediately uploaded and tested. We needed a hard pencils-down date to account for these delays.

On set with Dale Earnhardt Jr.

Exporting the experience as non-interactive, linear video for outlets like Gear VR, YouTube and Facebook 360 can be very time-consuming: it took one machine 16 hours to render 30 seconds of the experience.

You want your experience to get the widest possible distribution, so we recommend bolstering your process by investing in GTX 980 graphics cards or the newly released GTX 1080s, and building in enough time for these long exports.
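Because export time scales with the length of the footage, it helps to budget it up front. The sketch below turns the quoted rate (about 16 hours on one machine per 30 seconds of experience) into an estimate. It assumes render time scales linearly with duration and that frames split evenly across machines; the function names and the example run length are hypothetical.

```cpp
#include <cassert>

// Rate from the text: one machine needed about 16 hours to render
// 30 seconds of the experience.
constexpr double kHoursPerChunk = 16.0;
constexpr double kSecondsPerChunk = 30.0;

// Total single-machine render time for a given length of footage,
// assuming render time scales linearly with duration.
double machineHours(double footageSeconds) {
    assert(footageSeconds >= 0.0);
    return footageSeconds / kSecondsPerChunk * kHoursPerChunk;
}

// Wall-clock estimate when frames are split evenly across several machines.
// Setup, file transfer and stitching overhead are ignored, so treat the
// result as a lower bound.
double wallClockHours(double footageSeconds, int machines) {
    assert(machines > 0);
    return machineHours(footageSeconds) / machines;
}
```

For a hypothetical three-minute cut, `machineHours(180.0)` gives 96 machine-hours, and `wallClockHours(180.0, 4)` gives 24 hours across four machines, before any overhead.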

4. Know Your Audience

The experience you create should cater to your entire target audience. Its members will have varying degrees of familiarity with VR, and some will likely be VR virgins.

A successful VR experience should prompt any viewer to explore and take in the entire world, not just one set point of view. We took great care in our storytelling tactics to make sure there were varying key action points to grab the user’s attention.

For example, we integrated ‘bullet time,’ which slows down all movement to encourage the driver to fully take in the 360-degree environment at the most exciting moments, from a whale jumping overhead to an avalanche of boulders.
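Bullet time is commonly implemented as time dilation: the world simulation advances by the real frame time multiplied by a factor below 1.0. The sketch below is an assumption about how one such moment could be structured, not the production implementation; `BulletTime`, `dilationAt` and `advanceWorldTime` are hypothetical names, and the linear ease-in and ease-out is one choice among many. In Unreal, a global factor like this can be applied with `UGameplayStatics::SetGlobalTimeDilation`. The sketch scales only world time and assumes the headset pose keeps updating in real time.

```cpp
#include <algorithm>
#include <cassert>

// Hypothetical parameters for one slow-motion moment (e.g. a whale jump).
struct BulletTime {
    double startSeconds;  // real time when the slowdown begins
    double endSeconds;    // real time when normal speed is fully restored
    double rampSeconds;   // duration of the ease-in and of the ease-out
    double slowFactor;    // world speed at the peak, e.g. 0.2 = 20% speed
};

// Time-dilation factor at a given real time: 1.0 outside the window,
// slowFactor in the middle, with linear ramps in between so the change
// in speed is not abrupt.
double dilationAt(const BulletTime& bt, double realSeconds) {
    assert(bt.rampSeconds > 0.0);
    assert(bt.endSeconds - bt.startSeconds >= 2.0 * bt.rampSeconds);
    if (realSeconds <= bt.startSeconds || realSeconds >= bt.endSeconds) {
        return 1.0;
    }
    const double fromStart = realSeconds - bt.startSeconds;
    const double toEnd = bt.endSeconds - realSeconds;
    // 0 at the window edges, reaching 1 once a full ramp has elapsed.
    const double blend = std::min({fromStart, toEnd, bt.rampSeconds}) / bt.rampSeconds;
    return 1.0 + (bt.slowFactor - 1.0) * blend;
}

// Advance world (game) time by one frame. Only the world simulation is
// scaled; head tracking is assumed to stay in real time.
double advanceWorldTime(const BulletTime& bt, double worldSeconds,
                        double realSeconds, double realDeltaSeconds) {
    return worldSeconds + realDeltaSeconds * dilationAt(bt, realSeconds);
}
```

With `BulletTime{10.0, 16.0, 1.0, 0.2}`, the world runs at full speed before 10 seconds, eases down to 20% speed by 11 seconds, holds there until 15 seconds and returns to full speed at 16 seconds.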

We also had our motion-captured celebrity drivers act as co-pilots who gave directions, telling the driver to “look up” or “watch out” for oncoming traffic.
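Prompts like these fit naturally into trigger-driven logic. As a hypothetical sketch (the names, the distance-along-track measure and the fire-once rule are our assumptions, not details from the production), each co-pilot line can be tied to a point on the track and fired the first time the car passes it.

```cpp
#include <cassert>
#include <string>
#include <vector>

// Hypothetical cue: a line the co-pilot should say once the car passes a
// given distance along the track.
struct CoPilotCue {
    double triggerDistance;  // distance along the track, in meters
    std::string line;        // e.g. "Look up!" or "Watch out!"
    bool fired = false;
};

// Called once per frame with the car's progress along the track.
// Returns every cue crossed since the last call. Each cue fires only once,
// even if the car lingers past its trigger point.
std::vector<std::string> updateCues(std::vector<CoPilotCue>& cues,
                                    double distanceAlongTrack) {
    assert(distanceAlongTrack >= 0.0);
    std::vector<std::string> triggered;
    for (CoPilotCue& cue : cues) {
        if (!cue.fired && distanceAlongTrack >= cue.triggerDistance) {
            cue.fired = true;
            triggered.push_back(cue.line);
        }
    }
    return triggered;
}
```

A real project would hand each returned line to the audio and character animation systems; here the function only reports which cues fired on a given frame.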

Co-pilot Chase Elliott

Key Takeaways

Although you can go really big with your production hardware, you also need to take into account the headset and method that your audience will be using to view the experience.

The richest experiences have amazing visuals but require massive CPU and GPU power to drive them, which most people don’t yet have at home. Luckily, we showed this experience on the Oculus Rift at events, where we had full control over the hardware. Had the end product been meant for consumer-level computers, we might have rethought our need for particles, optical flares and final video effects.
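One way to make “massive CPU and GPU power” concrete is a per-frame time budget: a headset refreshing at a fixed rate gives the renderer only a fixed slice of time per frame, and every particle system, flare and post effect has to fit inside it. The consumer Oculus Rift refreshes at 90 Hz, which leaves about 11.1 ms per frame. The sketch below is illustrative only; the function names and any per-pass costs fed to it are hypothetical, not measurements from this project.

```cpp
#include <cassert>
#include <numeric>
#include <vector>

// Per-frame time budget in milliseconds for a display refresh rate.
// At 90 Hz this is 1000 / 90, or about 11.1 ms.
double frameBudgetMs(double refreshHz) {
    assert(refreshHz > 0.0);
    return 1000.0 / refreshHz;
}

// Returns true if the summed GPU cost of all render passes fits in the
// budget. The per-pass costs are hypothetical inputs, e.g. from a profiler.
bool fitsBudget(const std::vector<double>& passCostsMs, double refreshHz) {
    const double total =
        std::accumulate(passCostsMs.begin(), passCostsMs.end(), 0.0);
    return total <= frameBudgetMs(refreshHz);
}
```

For instance, hypothetical passes costing 6.0, 2.5 and 1.5 ms fit within the 90 Hz budget, while adding a 2.0 ms flare pass pushes the total to 12 ms and over it.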


Ultimately, when creating an experience with Unreal you have to be prepared to change the way you work. This means a different cast of characters and a completely different workflow. You need to be flexible, because you can’t fit the old way of doing things into this new engine.

Oh yeah, and don’t be cheap when it comes to graphics cards. They will pay off!

Credits

Creative Director: Cameron Templeton

Senior Copywriter: Sam Isenstein

Co-Directors: Bruno Ferrari & Jay Harwood

VP, Content Development: Seth Tabor

Head of Production: Will Russell

Executive Producer: Chris Grey

Program Director: Katie Leo

Producers: Ezequiel Asnaghi, Erik Gullstrand, William Russell

Technical Director: Ron White

Concept Art: Yun Chen, Bruno Ferrari, Jay Harwood

VFX: Mike Bourbeau

3D Artists: Jay Harwood, Zed Bennett, Mike Bourbeau, Yun Chen, Anthony Patti

Character Animation: Mike Bourbeau, Kevin Scott

Rigging: Mike Bourbeau

Unreal Artists: Ron White, Mike Bourbeau

Unreal Developers: Matthew Modaff, Jeffrey Soldan

Sound Design: Dan Dzula

Music: Gene Back

Motion Capture: MotionCaptureNYC